AUTHOR: SARAH


During this R&D period of our project, we have been amassing a treasure trove of ideas and opinions around the experience of the patient narrative. A few weeks ago, we arranged to spend time exploring this area further with the Data Linkage Service User and Carer Advisory Group, which was set up to provide Patient and Public Involvement (PPI) for mental health data linkage projects using CRIS data. We had provided the group with some questions in advance, mostly to suggest some focus for discussion. These were:

1. What does ‘patient narrative’ mean to you?
2. What are your expectations about how, and how much of, your narrative is captured in the clinical record?
3. If you feel that your narrative is captured sufficiently, what is it that makes it so?
4. Is it important for your narrative to be captured in the clinical record or do you feel that being heard and understood during the consultation is enough?
5. How do you feel about methods to capture the patient narrative such as audio/video recordings? What other means might enhance this process considering, among other issues, ethics and accuracy?

In response to ‘what does ‘patient narrative’ mean to you?’, a group member said that it was their story, their journey of fighting mental illness and fighting the system. Their experience was that of not being listened to, particularly around medication and side effects. The shortness of psychiatry consultations meant that the experience was a fire-fighting exercise. Having multiple psychiatrists over your journey can mean that a lot of your personal narrative is lost.

We reflected on the fact that this lack of continuity is an important and challenging issue in a mental healthcare system. What we were hearing was that people had progressed in their journey despite the inconsistent care that had been provided, and that they had developed skills to move forward while not feeling listened to.
This is not captured at all or identified in current narratives, of course. Because ‘progress’ can be tracked or categorised in different ways (e.g., hospital criteria), identifying it becomes even more complex.

We discussed how CRIS can inform better patient care through its use as a research database. But when we talk to service users, a lot of them are dissatisfied with the patient care throughout their journey. Maybe they have a good clinician at some point, but the negative experiences of the journey are still there. If we could collect people’s narratives and distil information out of them, it would ensure more consistency. The group expressed its appreciation for the work we are doing on highlighting patient narratives, which may help to give voice to a part of the service user experience that is largely in the shadows.

A group member said there were lots of positive things going on in the South London and Maudsley NHS Trust where patients can express themselves through different media, but this is not always captured or put into the record. If it were captured, it could improve the patient’s wellbeing and also have benefits for other patients.

If things were documented differently, what would that look like? The group discussed how there had to be a fine line between capturing enough information to be heard, but not so much that details risk being overlooked. The amount of time you have available to talk was deemed important: as patients, we pick out the key points of the story and the clinician records those, rather than considering the whole story. The group reflected that the whole story should be listened to, rather than just the highlights.

What is more important: to have a lot of data about our narrative which encapsulates the whole story but may have errors, or to have a shorter snapshot which may be more accurate?
A group member said the latter was best, as nothing is worse than misinformation in the record, which can then follow you throughout life. If one statement is put on someone’s record, others take it at face value. Having it redacted is technically possible but could be a very difficult and lengthy process. Often the statement will be edited rather than removed. This said a lot about power and control of the narrative. These errors might not be malicious and may come from a misunderstanding, but they follow us and mean it is our word against theirs. Unless we access our record or happen to find out, we might not know what misunderstandings or gaps are in our narrative. A comments section in the record could help with this.

Insightful, warm and eye-opening. We visited the group with the intention of unpicking the layers that make a good patient narrative: the small and large details that could contribute to a better representation of what it is that we go through as service users. Suffice it to say that our questions felt unimportant and secondary as a poignant issue was brought to light. How can you capture or facilitate a better patient narrative when so many people feel that their fragmented experience has stripped them of the chance to have any narrative at all?

AUTHOR: ANNA

BUILDING HEALTHCARE AI FOR THE REAL WORLD

SPEAKER: TOM DYER, BEHOLD.AI

A really interesting talk thinking about AI vs. humans, and about computers replicating and replacing human processes.

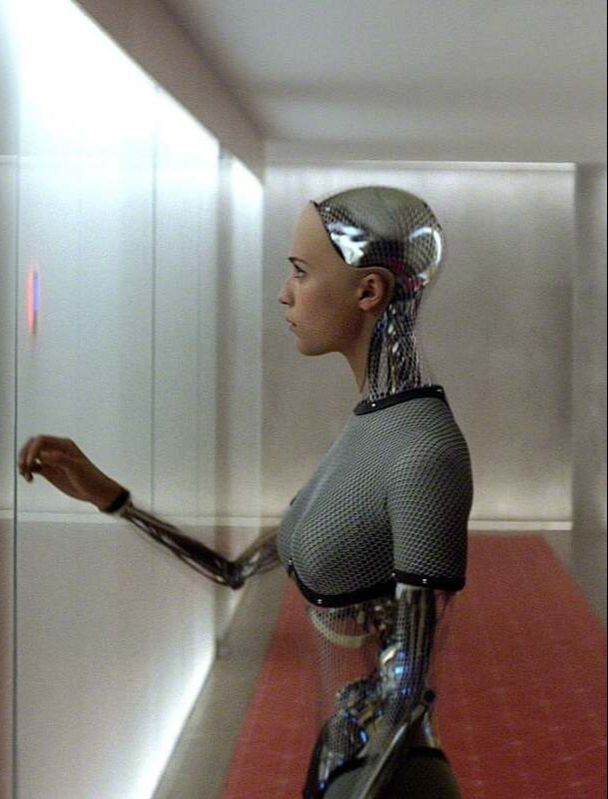

In research, the outcome is often measured as human performance vs. AI, so essentially: can it be proven that AI is as good as or better than humans? Dyer demonstrated that the combination of AI plus human performance always gives better results than either AI or humans can produce alone. The question is, how can we work with AI to improve existing processes and complement human skills?

Dyer spoke of the narrative of AI in the media: the recurring theme of robots being a threat to human beings, that they will take our jobs and eventually threaten our very existence by replacing us. Robots are seen as a threat to human beings - we are portrayed as being weaker. Dyer framed things differently. He spoke of AI cooperating with humans, complementing our skills, and being used as a second opinion or cross-reference.

In terms of diagnosis, AI is easily trained to determine severity of symptoms, and to easily and accurately decide if a patient needs either immediate treatment or no treatment at all. This means that the workload of a clinician can be significantly decreased and more time can be spent on more complex cases that need further analysis. The crucial part of the AI puzzle, which is yet to be deciphered, is what happens if the machine sees something unfamiliar. Instead of saying "I'm not sure", it might automatically place something in its nearest category. These are the instances where we see how vital the clinician's role is and how irreplaceable they are.

Imagine though, in a clinical setting, AI taking on the tasks that involve memorising and recalling information (basically what we would define as the "knowledge" involved). This would mean that clinicians would have more time to engage other important skills such as compassion, empathy and understanding to make a fuller, more rounded diagnosis. This resonated with me and my thoughts on our project. Could this lead to more of the patient narrative being heard and given value in the clinical setting?
By allowing better resources for a clinician to utilise their human skills (e.g. compassion), richer and more valuable information might be drawn from the patient, helping the clinician to make a better assessment. At the same time, AI could capture the patient narrative and analyse it using its knowledge. Together, this would provide a more rounded picture of the patient.

My mental wellbeing is severely affected by feeling misunderstood. Simply feeling heard is in itself medicine for me.

AUTHOR: SARAH

AUTHOR

Here you will find blog posts by both Anna and Sarah

